Guardrails AI

TL;DR

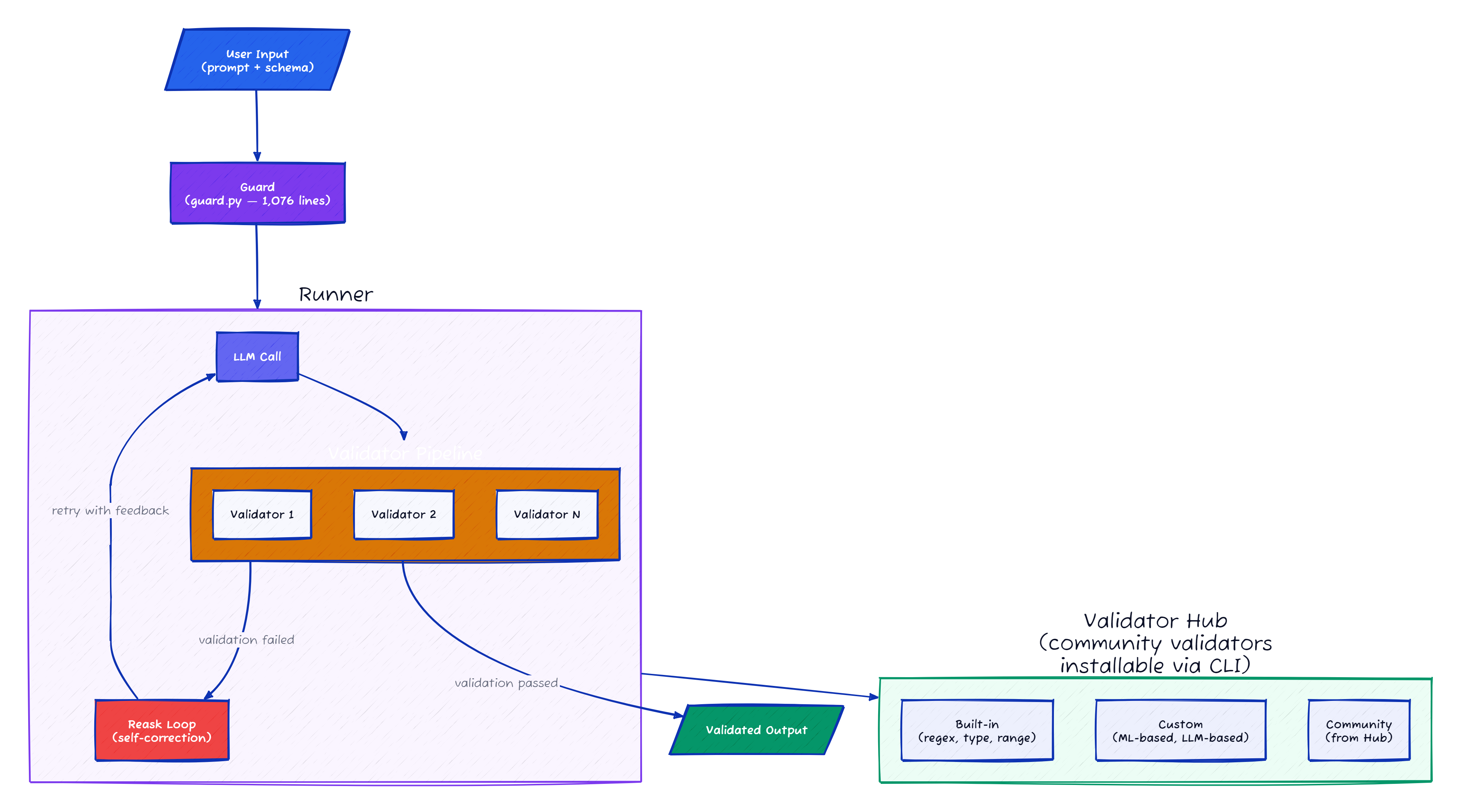

Guardrails AI is a middleware layer that sits between your code and the LLM and enforces output contracts. It wraps LLM API calls, validates each output against a schema, and optionally re-asks the model when validation fails. In practice it is closer to a package manager with a validation engine than to a security tool.
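The wrap, validate, re-ask pattern can be sketched in plain Python. This is a library-agnostic illustration, not the Guardrails API: `SCHEMA`, `validate`, `guarded_call`, and `fake_llm_factory` are hypothetical names invented for this example, and the "LLM" is a stub that returns canned replies.

```python
import json
from typing import Callable

# Hypothetical contract: output must be a JSON object with these typed fields.
SCHEMA = {"name": str, "age": int}


def validate(raw: str) -> list[str]:
    """Return a list of validation errors; an empty list means the contract holds."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value must be an object"]
    errors = []
    for field, expected in SCHEMA.items():
        if field not in data:
            errors.append(f"missing field '{field}'")
        elif not isinstance(data[field], expected):
            errors.append(f"field '{field}' must be {expected.__name__}")
    return errors


def guarded_call(llm: Callable[[str], str], prompt: str, max_reasks: int = 2) -> dict:
    """Call the model, validate its output, and re-ask with the errors on failure."""
    current = prompt
    errors: list[str] = []
    for _ in range(max_reasks + 1):
        raw = llm(current)
        errors = validate(raw)
        if not errors:
            return json.loads(raw)
        # Feed the validation errors back so the model can self-correct.
        current = (
            f"{prompt}\n\nYour previous answer was invalid: "
            f"{'; '.join(errors)}. Return corrected JSON only."
        )
    raise ValueError(f"output still invalid after {max_reasks} re-asks: {errors}")


def fake_llm_factory() -> Callable[[str], str]:
    """Stub model (assumption for the demo): first reply is invalid, second is valid."""
    replies = iter(['{"name": "Ada"}', '{"name": "Ada", "age": 36}'])
    return lambda prompt: next(replies)


if __name__ == "__main__":
    print(guarded_call(fake_llm_factory(), "Describe Ada as JSON"))
```

Raising after the re-ask budget is exhausted is only one possible failure policy; a real middleware layer would typically make that behavior configurable.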

Key Findings: Validator Hub, reask self-correction loop, 8 OnFailAction variants